Application essays are high-stakes writing. They’re used by colleges, graduate programs, and professional schools to assess a candidate’s communication skills, personal experiences, and fit for the institution. Unlike academic papers that follow strict formatting rules, application essays are personal narratives that require authenticity and individual voice. The rise of AI writing tools has created new concerns for admissions committees trying to identify applicants who’ve used ChatGPT or similar models to write their essays.

AI detectors face unique challenges with application essays. Personal writing varies widely in style, tone, and structure. Some applicants write in polished, formal prose, while others use conversational language and informal storytelling. Some essays follow traditional narrative structures while others experiment with format. This variability makes it harder for detectors to establish a baseline for what “AI-generated” looks like. False positives are especially concerning in admissions contexts because they can unfairly disqualify qualified candidates who happen to write clearly or who’ve received extensive editing help.

This guide evaluates AI detectors based on their ability to handle personal narrative writing and their appropriateness for admissions evaluation.

Why Application Essays Are Difficult to Detect

Personal essays are difficult to evaluate because “voice” is subjective: each person expresses themselves differently. Many students naturally write in a clear, organized way, and that style can lead to false positives. Other students write in a fragmented or experimental way. Although such writing is clearly human, it may confuse algorithms trained on writing that follows standard formats.

Another difficulty is that application essays are written and rewritten. Students receive input from teachers, counselors, family members, and professional editors, and this collaboration tends to make an essay more polished and consistent. Detectors trained to recognize formal writing structures may interpret that uniformity as a sign of AI generation. An essay that went through 10 drafts with extensive editing may still trigger a high detection score, even though it is the product of the student’s own effort.

Finally, application essays come in many forms. Some follow a traditional narrative arc (beginning, middle, and end), while others use non-traditional formats such as lists, vignettes, and dialogues. Detectors trained on conventional formats may not handle these creative structures well and may score them as strongly human or strongly AI-generated, depending on how far they deviate from the norm.

What Admissions Committees Should Consider

Admissions committees should not rely solely on the results of an AI detector when reviewing an application essay. Application essays are far too personal and diverse to be judged by a single detection score. If a committee does use an AI detector as part of its evaluation, a high score should be treated as a cue to examine the essay more closely, not as conclusive evidence that the essay was generated by AI.

Consider whether the sections of the essay that triggered the detection value sound unnatural or inconsistent with the rest of the application. Also consider whether the essay appears to match the applicant’s transcript, letters of recommendation, and interview performance. Reviewers should compare the voice of the applicant throughout the entire application rather than relying on a single detection value.

Extensive editing of an essay is normal and expected. Polished writing is not indicative of AI-generated writing. The intent is to identify essays that do not represent the applicant’s genuine voice and experiences, not to punish applicants for having written well or for having revised their essays extensively.

Best AI Detectors for Application Essays

Proofademic

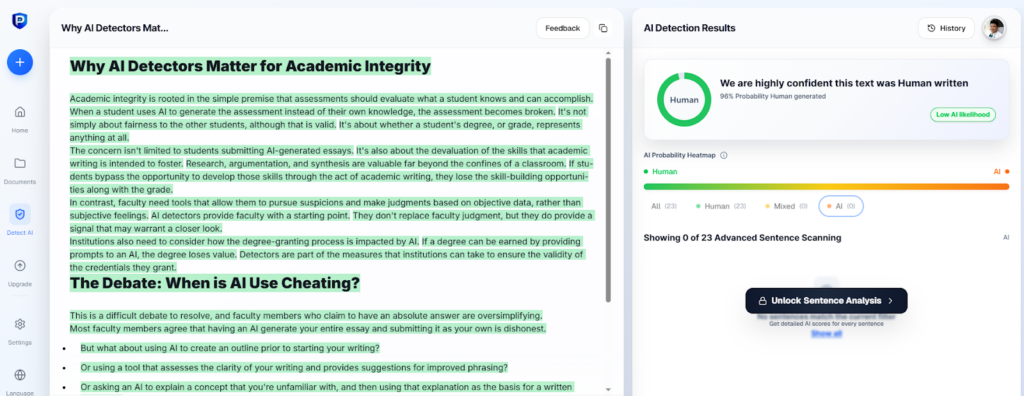

Although Proofademic was developed for academic use, it performs better than many general-purpose detectors on personal narrative writing. It analyzes each sentence of an essay and flags the passages most likely to be AI-generated, which shows admissions committees where to focus their attention. The tool was trained to recognize formal writing structures, but it has proven flexible across a variety of writing styles.

We tested Proofademic on application essays written in both formal and informal styles, and it performed well. It correctly identified clearly AI-generated essays. On human-written essays that had gone through many revisions, it was restrained and did not raise significant red flags. The sentence-by-sentence analysis showed exactly which portions of each essay drove the score, and the tool handled emotionally charged, personal language better than detectors built for technical or academic content.

Proofademic is particularly useful for admissions committees that prefer to review the specific passages that raised red flags rather than rely on a single percentage. It is also useful for students who want to check their essays before submission.

Proofademic offers free access to basic detection, with premium plans for institutions. It is best suited to evaluating college and graduate school application essays, where an authentic voice and genuine personal experience matter most.

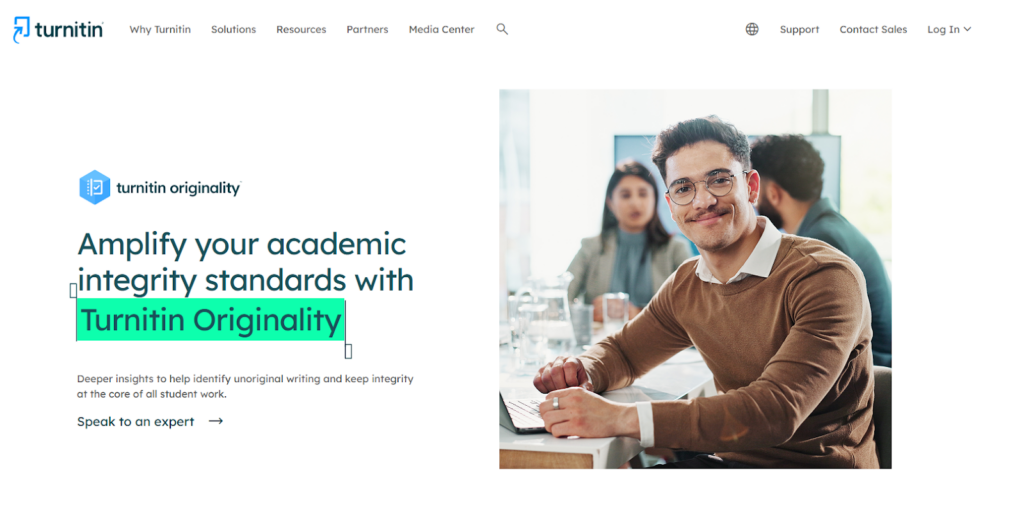

Turnitin

Many colleges and universities use Turnitin for plagiarism detection, and many of them also have some form of access to its AI detector. The AI detector reports a percentage score that can be weighed alongside other parts of the application. Turnitin is a name many institutions trust, with a long track record in plagiarism detection.

However, Turnitin does not provide feedback at the sentence level, which makes it harder to evaluate an application essay thoroughly. In our testing, Turnitin flagged human-written essays with clear, organized narrative structures as heavily AI-generated. It also struggled with extensively edited essays and in some cases treated their polish as evidence of AI generation. Turnitin is best used as a screening tool rather than as the definitive test of whether an essay was AI-generated.

Turnitin is a subscription service that is usually available only through institutions. It is most effective in the hands of reviewers who know how to interpret its AI detection output.

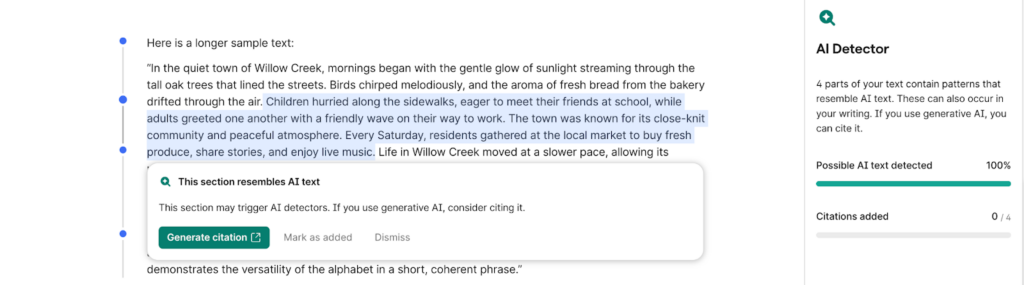

Grammarly AI Detector

Grammarly’s AI detector is free and can be used without signing up. It reports a percentage score, is easy to use for quick reviews, and processes essays very quickly.

However, Grammarly’s AI detector tends to flag polished, clearly organized essays as AI-generated. In our testing, it frequently reported high AI scores for human-written application essays that had been carefully revised and edited. This is a problem for application essays, because students are encouraged to revise extensively and to seek feedback from multiple sources. The detector also provides no explanation for its score, so users cannot tell which parts of the essay contributed to it.

Grammarly’s AI detector is free and located on Grammarly’s website. It is best used as a quick screening device and should not be relied upon for making admissions decisions.

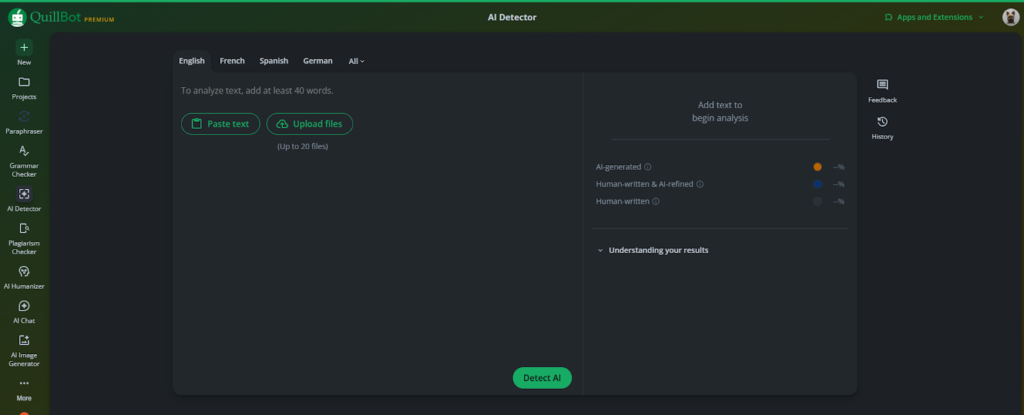

Quillbot AI Detector

Quillbot’s AI detector is free, with no limit on the number of words processed. It is fast, does not require an account, and reports a simple percentage score.

In our testing, Quillbot’s AI detector produced mixed results on application essays. It identified AI-generated content fairly accurately, but it also assigned high percentages to human-written essays with polished prose and clear structure. The tool does not perform sentence-by-sentence analysis. It is suitable for quick screening but is not well suited to serve as the primary tool for reviewing application essays.

Quillbot’s AI detector is free and is available without creating an account.

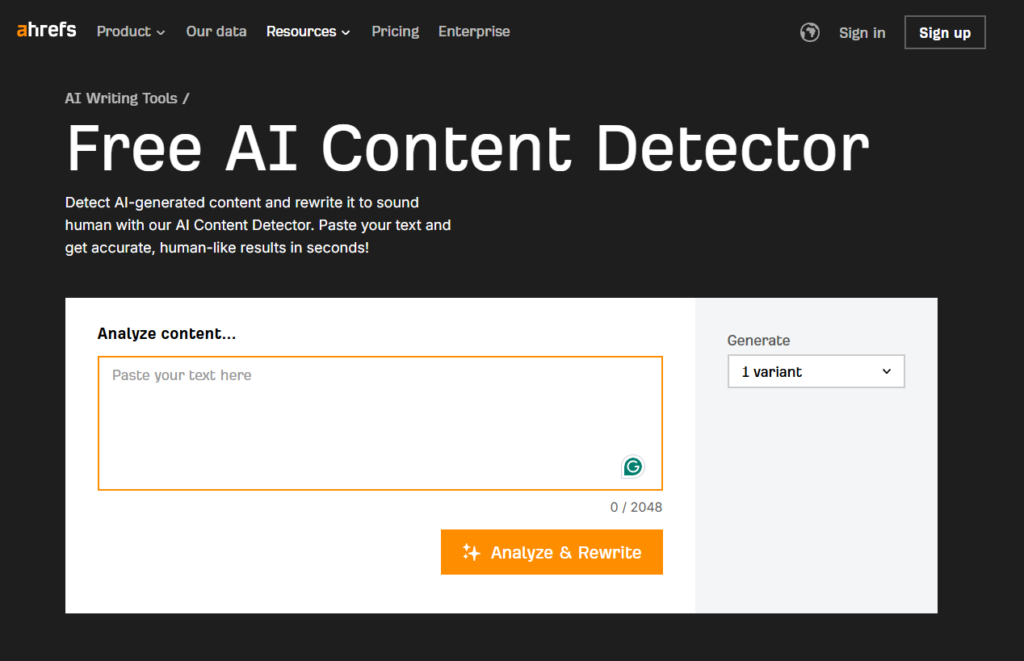

Ahrefs AI Detector

Ahrefs offers a free AI detection tool that reports a percentage score and highlights passages likely to be AI-generated. Highlighting is more useful than a bare percentage because it lets reviewers see which specific passages drove the score.

Ahrefs performed adequately on clearly AI-generated application essays. However, it also rated polished personal narratives as high-risk in several instances. The highlighting helped pinpoint the passages in question, but Ahrefs gave no explanation of why those passages were flagged.

Ahrefs’ AI detector is free and is available on Ahrefs’ website.

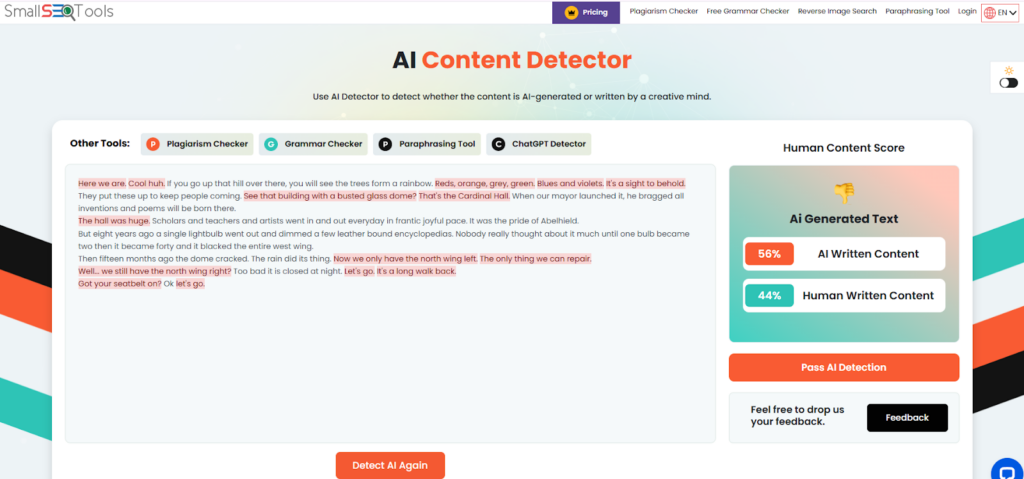

Small SEO Tools AI Detector

Small SEO Tools provides a free AI detection tool that requires no registration. The tool produces a percentage value immediately.

However, Small SEO Tools produced inconsistent results in our testing. At times it labeled human-written application essays as heavily AI-generated, most often when the writing was clear and well organized. The tool provides no breakdown or explanation of its results.

The detector is free and is available on the Small SEO Tools website. It is best for casual use.

Testing Methodology

We tested each detector using application essays from college, graduate school, and professional school applicants. We used human-written essays, AI-generated versions of similar personal narratives, and hybrid essays that combined both. We focused on how each tool handled personal storytelling, emotional language, and informal tone, all of which differ from academic writing. We also tested essays that had been edited or revised multiple times, since application essays typically go through extensive review. Testing occurred between December 2025 and January 2026.

Limitations of Detecting AI Through Probabilistic Tools

AI detectors are probabilistic tools, not definitive authorship identification tools. They identify patterns in writing and estimate the probability that a given piece was produced by AI, written by a human, or created through a combination of both. They cannot determine with certainty whether a particular piece of writing was produced by a computer or a human being.

The accuracy of these tools varies significantly. The exact same essay can receive very different scores from different detectors, so the result of any single tool can’t be relied upon on its own.

In addition, detection models are trained on data sets that may not reflect the full range of human writing styles, particularly the personal, informal, and emotive language common in application essays.

False positives are a significant problem in admissions settings. Students who write clearly, organize their ideas well, or revise their work extensively are more likely to be flagged as having used AI, even when they have not. This happens because detectors rely partly on surface features of writing, such as organization, grammatical correctness, and clarity, that careful human writing shares with AI-generated text.

AI detectors can’t give an admissions committee the full context of a student’s application. They analyze text in isolation and don’t take into account the student’s background, the voice evident in their other application materials, or the circumstances in which the essay was written. On its own, a detection score therefore tells an admissions committee very little.

Finally, AI writing tools are changing rapidly. A detector trained on output from older models may fail to recognize output from newer ones. This leaves an ongoing reliability gap that has yet to be closed.

Frequently Asked Questions

Can AI detectors definitively prove an application essay was written using ChatGPT?

No. AI detectors base their estimates on patterns, but they cannot definitively determine authorship. Because application essays are personal and vary widely in style, detection is even less reliable for them than for academic writing.

Why would a human-generated application essay be identified as AI-generated?

Polished, well-organized writing is often flagged as AI-generated. Revision is a normal part of the application process, and heavily revised and edited essays tend to have a uniform style and structure. Detectors often treat that uniformity as a sign of AI generation. Students who naturally write in a clear, organized way are also at greater risk of false positives.

Are there any benefits to using AI detectors to evaluate application essays for colleges?

AI detectors can serve as one screening tool among many available to admissions committees. However, they should never be the only factor in an admissions decision. Committees should treat a high detection score as a signal to review the essay more closely, not as proof of AI use. They should then consider the application as a whole, including the applicant’s transcript, letters of recommendation, and interview performance, to check that the applicant’s voice and experiences come through consistently.

Do different AI detectors report different values for the same application essay?

Yes. Different AI detectors use different models and may report different results for the same essay. This inconsistency is another reason detection scores should never be the sole basis for judging whether an application essay is authentic.

Can students use AI detectors to check their essays before submitting them?

Yes, many students do this. However, students should be wary of heavily rewriting their essays just to lower a detector’s score. The goal is to write authentically and tell your own story, not to trick the detector into reporting a low value.

Which is the best AI detector for college application essays?

Proofademic analyzes personal narrative writing better than many general-purpose detectors and provides sentence-by-sentence analysis. For institutional use, Turnitin is widely available, although it does not provide sentence-level analysis.

What should admissions committees do when receiving a high detection value?

High detection values should be a cue for the admissions committee to review the essay further and compare it to other components of the application. The committee should evaluate whether the voice of the applicant is consistent throughout the essay and other application materials, and whether the essay sounds natural and aligned with the applicant’s experiences and credentials.

Is extensive editing of an application essay evidence of AI-generated content?

No. Application essays are expected to be revised extensively, and many receive outside editing. Clarity and polish are not evidence of AI-generated content. Admissions committees should focus on whether the essay reflects the applicant’s genuine voice and experiences, not on whether it is well written.